The Population Health VP's Guide to RPM Vendor Evaluation

A research-based guide to RPM vendor evaluation for population health VPs, covering integration, adherence, staffing, reimbursement, and scale.

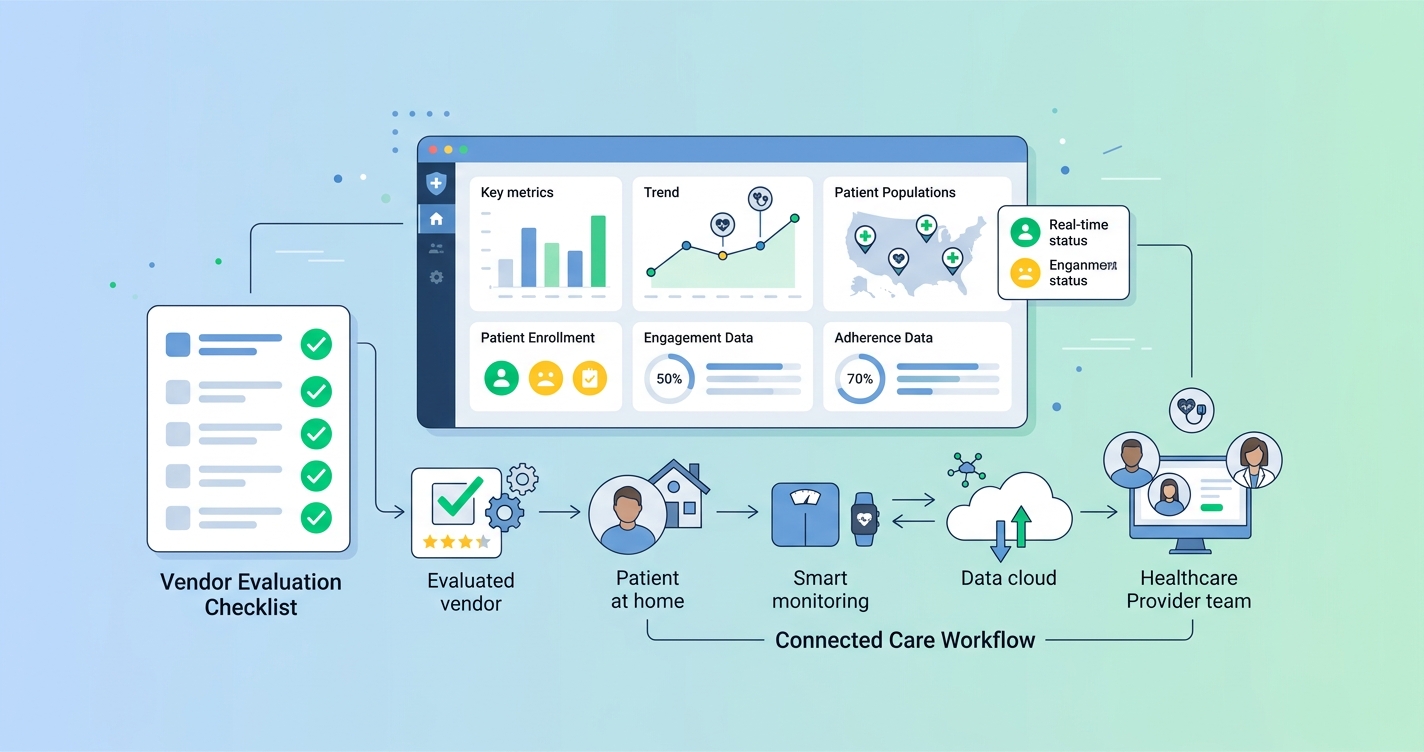

For a population health VP, evaluating remote patient monitoring (RPM) vendors is not really a software shopping exercise. It is a care-model decision with downstream effects on staffing, reimbursement, clinical response times, and whether patients stay engaged long enough for the program to matter. Health systems can buy a polished dashboard and still end up with weak enrollment, fragmented data, and support queues full of device problems. That is why the best evaluation process starts with operating reality, not feature lists.

"RPM interventions demonstrated positive outcomes in patient safety and adherence." — Si Ying Tan, Jennifer Sumner, Yuchen Wang, and Alexander Wenjun Yip, npj Digital Medicine (2024)

RPM vendor evaluation starts with program fit

A population health leader usually inherits competing pressures at the same time: reduce readmissions, expand chronic disease surveillance, support value-based contracts, and avoid creating another labor-heavy pilot that never scales. That makes vendor evaluation more strategic than it sounds.

The question is not just whether a platform can collect vitals. It is whether the vendor's model matches the care pathway the health system is actually trying to run. David Levine and colleagues showed in a randomized clinical trial published in JAMA Network Open that remote physician visits in hospital-at-home care were noninferior to in-home physician visits for adverse events and patient experience. I keep coming back to that study because it captures the real point of RPM infrastructure: scale only matters if the operating model stays clinically credible.

For population health teams, that usually means evaluating five issues before anything else:

- how much patient friction the program introduces

- how quickly data reaches the clinical team

- how well the platform fits the EHR and care-management workflow

- whether staffing assumptions are realistic at scale

- whether the economics work after the pilot period ends

Comparison table: what population health VPs should compare first

| Evaluation dimension | Questions to ask | Why it matters for population health |
|---|---|---|
| Patient adherence | How many steps does a patient need to complete? Is hardware shipped, paired, charged, and replaced? | The best signal is still useless if participation collapses after week one |
| Clinical workflow | Who reviews alerts, trends, and exceptions? What is the escalation path? | RPM fails fast when alerts arrive without clear ownership |
| EHR integration | Does data flow into Epic, Cerner, or care-management systems in a usable format? | Fragmented workflows add charting burden and reduce actionability |
| Device and logistics burden | How much inventory, onboarding, and technical support is required? | Logistics costs can erase the business case in broad population programs |
| Reimbursement support | Does the vendor support documentation, billing workflows, and operational reporting? | Financial sustainability matters once grant or pilot funding ends |
| Health equity and access | Can the model work for low-connectivity, low-literacy, or underserved populations? | Population health programs break when the workflow only works for digitally confident patients |
| Reporting and outcomes | Can the platform report engagement, utilization, escalation, and outcome trends clearly? | Leaders need evidence for expansion, budgeting, and payer conversations |
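One way to turn the comparison table into something a procurement team can fill in is a weighted scorecard. The sketch below is illustrative only: the dimension keys mirror the table rows, but the weights, the 1-to-5 rating scale, and the function name `weighted_score` are assumptions rather than anything prescribed by the sources cited here. Each health system would set its own weights.

```python
# Hypothetical weights for the seven dimensions in the table above.
# These values are placeholders for illustration; they must sum to 1.0.
WEIGHTS = {
    "patient_adherence": 0.25,
    "clinical_workflow": 0.20,
    "ehr_integration": 0.15,
    "device_logistics": 0.10,
    "reimbursement_support": 0.10,
    "health_equity": 0.10,
    "reporting_outcomes": 0.10,
}

assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9, "weights must sum to 1.0"


def weighted_score(ratings):
    """Combine per-dimension ratings (assumed 1-5 scale) into one score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for dimension in WEIGHTS:
        if not 1 <= ratings[dimension] <= 5:
            raise ValueError(f"{dimension} rating must be between 1 and 5")
    total = sum(WEIGHTS[d] * ratings[d] for d in WEIGHTS)
    return round(total, 2)


# Example: a vendor rated 3 everywhere except a 5 on patient adherence.
example_ratings = {dimension: 3 for dimension in WEIGHTS}
example_ratings["patient_adherence"] = 5
print(weighted_score(example_ratings))
```

A single number never replaces reference calls or a workflow walkthrough, but agreeing on weights up front forces the team to state how much adherence matters relative to features before any demo.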

Why adherence belongs near the top of the scorecard

Plenty of RPM procurement conversations still treat adherence as a secondary issue. That is backward.

Tan, Sumner, Wang, and Yip reviewed 29 studies across 16 countries and found that RPM interventions improved safety and adherence overall, while also showing a downward trend in admissions, readmissions, and outpatient utilization. That does not mean every platform gets those results automatically. It means the value proposition of RPM is tightly tied to whether people keep using it.

This is where a population health VP needs to be blunt during evaluation. If the vendor depends on multiple devices, complex setup, or heavy caregiver support, the platform may look strong in a demo and weak in the field. A lower-friction model often creates more usable data simply because more patients complete the monitoring step.

A practical adherence review should cover:

- enrollment completion rate

- 30-day and 90-day participation trends

- dropout reasons by patient segment

- support tickets per 100 patients

- average time from outreach to first successful reading

- whether the workflow works on devices patients already own
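Most of the metrics in this list can be computed from a simple per-patient export, which is worth requesting from any vendor during evaluation. The following is a minimal sketch under stated assumptions: the `PatientRecord` fields and the `adherence_summary` function are hypothetical, "enrollment completion" is taken to mean an outreached patient who enrolled and produced at least one reading, and a patient counts as participating at day N if their last reading came at least N days after their first. It does not cover dropout reasons by segment, and it ignores patients who enrolled too recently to reach the 30-day or 90-day mark.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class PatientRecord:
    # Hypothetical export fields; real vendor exports will differ.
    outreach_date: date
    enrolled: bool
    first_reading_date: Optional[date] = None
    last_reading_date: Optional[date] = None
    support_tickets: int = 0


def _ratio(numerator, denominator):
    """Safe division that returns 0.0 for an empty denominator."""
    return numerator / denominator if denominator else 0.0


def adherence_summary(records):
    enrolled = [r for r in records if r.enrolled]
    # "Activated" = enrolled patients with at least one successful reading.
    activated = [
        r for r in enrolled if r.first_reading_date and r.last_reading_date
    ]

    def still_active(days):
        return sum(
            1
            for r in activated
            if (r.last_reading_date - r.first_reading_date).days >= days
        )

    days_to_first = [
        (r.first_reading_date - r.outreach_date).days for r in activated
    ]
    total_tickets = sum(r.support_tickets for r in enrolled)
    return {
        "enrollment_completion_rate": _ratio(len(activated), len(records)),
        "participation_30d": _ratio(still_active(30), len(activated)),
        "participation_90d": _ratio(still_active(90), len(activated)),
        "tickets_per_100_patients": 100 * _ratio(total_tickets, len(enrolled)),
        "avg_days_outreach_to_first_reading": (
            mean(days_to_first) if days_to_first else None
        ),
    }
```

Asking two vendors to supply the same export and running the same calculation on both makes their adherence claims comparable, which vendor-defined "engagement" figures usually are not.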

The health-equity angle matters too. The California Telehealth Resource Center's RPM Vendor Selection Toolkit recommends checking smartphone compatibility, secure data handling, configurable alerts, service-level commitments, and billing support. That sounds operational because it is. Population health programs do not fail from lack of vision. They fail on small workflow frictions that add up.

Industry applications

Chronic disease management programs

Hypertension, heart failure, diabetes, and COPD programs usually need repeat engagement more than flashy analytics. In these pathways, vendor evaluation should focus on sustained monitoring behavior, exception management, and whether nurses can intervene before deterioration becomes an ED visit.

Hospital-at-home and post-discharge pathways

Hospital-at-home programs expose weak vendor assumptions quickly. A 2024 systematic review in the Journal of the American Medical Directors Association identified 21 randomized trials of remote vital sign monitoring in admission-avoidance hospital-at-home models and found reduced readmission in the automated monitoring subgroup. That is important, but the authors also pointed to reporting gaps and uneven evidence. In other words, buyers should not assume every monitoring model is equally mature.

Safety-net and broad population outreach

Programs aimed at underserved populations need a harder look at language access, connectivity, and device burden. If enrollment depends on broadband stability, Bluetooth troubleshooting, or frequent hardware replacement, the vendor may be a poor fit for broad population management even if the clinical concept is sound.

The operating model matters more than the feature list

I think this is where a lot of vendor evaluations go sideways. Teams compare alert types, dashboards, and device catalogs before asking what the care team will actually have to do each morning.

A sound RPM evaluation should map the daily operating model:

- who enrolls the patient

- who trains the patient or caregiver

- who monitors incoming data

- what thresholds create outreach or escalation

- how nights, weekends, and overflow are covered

- how nonadherence is tracked and addressed

If a vendor cannot describe this clearly, the platform is probably still selling possibility rather than a working delivery model.
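The operating-model questions above can be captured as a small ownership check that the evaluation team fills in with each vendor. This is a sketch, not a standard: the task keys paraphrase the list above, and the names `REQUIRED_TASKS` and `unowned_tasks` are hypothetical.

```python
# Task keys paraphrase the operating-model questions listed above.
REQUIRED_TASKS = [
    "enroll_patient",
    "train_patient_or_caregiver",
    "monitor_incoming_data",
    "define_outreach_and_escalation_thresholds",
    "cover_nights_weekends_overflow",
    "track_and_address_nonadherence",
]


def unowned_tasks(operating_model):
    """Return the required tasks that have no named owner."""
    return [
        task
        for task in REQUIRED_TASKS
        if not (operating_model.get(task) or "").strip()
    ]


# Example: a proposal that names owners for daytime work only.
proposal = {
    "enroll_patient": "health system care coordinator",
    "train_patient_or_caregiver": "vendor onboarding team",
    "monitor_incoming_data": "health system RN pool",
    "define_outreach_and_escalation_thresholds": "",
}
print(unowned_tasks(proposal))
```

Any task that comes back unowned is a question for the vendor before contracting, not after go-live.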

KLAS reporting on the RPM market has reinforced the same basic point: health systems care about outcomes, integration, and customer experience, not just remote data capture. For a population health VP, that means reference calls should focus less on whether users "like" the platform and more on whether the program produced sustainable staffing ratios, measurable engagement, and clean reporting for leadership.

Current research and evidence

Several sources help frame a more evidence-based RPM vendor evaluation process.

First, Tan, Sumner, Wang, and Yip's 2024 systematic review in npj Digital Medicine is one of the clearest summaries of RPM during transitions from hospital to home. The review included 29 studies and found positive results for safety and adherence, with broader cost-related trends moving in the right direction.

Second, David Levine, Michael Frieze, Bruce Leff, and colleagues reported in JAMA Network Open that remote physician visits for hospital-level care at home were noninferior to in-home physician visits for adverse events and patient experience. That is a useful reminder that scalable virtual models can work, but only when workflow design is sound.

Third, the 2024 hospital-at-home systematic review in JAMDA found that automated monitoring approaches were associated with lower readmission in the subgroup analysis. That should catch any population health executive's attention, though the authors also noted gaps in evidence and reporting quality.

Finally, practical toolkits such as the California Telehealth Resource Center's RPM vendor framework are useful because they force procurement teams to score security, interoperability, alert configuration, and support commitments instead of relying on vague sales language.

The future of RPM vendor evaluation will be less about devices and more about friction

The next few years will probably reward vendors that remove operational drag. Population health teams already know how to launch pilots. The harder challenge is building RPM programs that survive finance reviews, scale across service lines, and keep patients participating without an army of support staff.

That points to a few likely shifts:

- more scrutiny on adherence and activation metrics before contract expansion

- tighter demands for EHR integration and usable exception workflows

- stronger preference for lower-friction monitoring models where appropriate

- more segmentation, with hardware-heavy models reserved for pathways that truly need them

That last point matters. The best vendor is not the one with the longest device catalog. It is the one whose workflow matches the patient population, staffing model, and financial reality of the health system.

Frequently asked questions

What should a population health VP look for in an RPM vendor?

The short answer is program fit. Focus on adherence, workflow ownership, EHR integration, staffing assumptions, reimbursement support, and whether the model can scale across real patient populations.

Why is adherence so important in RPM vendor evaluation?

Because RPM only works when patients keep participating. A platform with modest technical ambition but low friction can outperform a more complex model if it produces more consistent engagement.

How should health systems compare RPM vendors for hospital-at-home programs?

They should examine alerting workflows, escalation coverage, monitoring automation, and how much labor the program requires per patient. Hospital-at-home models expose weak staffing and integration assumptions very quickly.

Are device logistics part of the vendor evaluation process?

Absolutely. Shipping, replacements, charging, pairing, and troubleshooting can become a major hidden cost in population-scale RPM deployments.
